Why maps matter

With new technologies and an explosion of geodata, more and more agencies are mapping to make sense of their missions.

People used to use maps so they wouldn't get lost. But in recent years, access to the Global Positioning System and the proliferation of mobile technology have made paper-based maps almost irrelevant. Unless you're in uncharted territory, it's hard to get lost anymore. Basic geography is as easy as inputting an address and letting your mobile phone tell you how to get there.

And as mapping technology advances, it allows for far more than foolproof directions. Federal agencies now use geospatial data, geo-analytics and multi-layered maps for myriad purposes, including gathering intelligence, predicting disease outbreaks and sharing data pools with the public.

The allure of mapping lies in its intuitiveness. Even simple "dots on a map can be a powerful way to see trends in data," said Josh Campbell, geographic information system architect for the Humanitarian Information Unit at the State Department. "Maps are a compressed mechanism for storytelling."

Last year, Campbell's office created a series of maps to track the mass migration of Syrians displaced by the country's ongoing violence. The HIU team combined data from thousands of media and internal reports with commercial satellite imagery. Each map provided a geographical snapshot of a place. Together, they showed trends over time and revealed the areas with the most intense conflict.

"That visualization can simplify complex data relationships among variables," Campbell said. "It's one thing to read the information, but I think visualization is a powerful way to consume information that scales beyond reading."

That is perhaps the most important aspect of maps: They make for better decision-making.

The Federal Communications Commission used to convey policy changes through 1,000-page Microsoft Word documents, said Mike Byrne, geographic information officer at the FCC. Now the agency uses cartography to explain complicated policy subjects such as spectrum allocation.

Officials rely on a mixture of open-source and proprietary tools to do that, but the focus is on creating a product that users can easily understand, whether those users are federal decision-makers or members of the general public, Byrne said.

"The platform for us is the Internet," he added. "At FCC, we take really complicated things and display them so that anyone can understand what the high-level landscape view looks like."

'So much easier than words'

The ease with which maps can be created, shared, accessed and understood is why they are reaching the highest levels of decision-making in government. As the mapping technology improves, even Congress is getting into the act.

Legislative committees and even individual lawmakers are hiring GIS experts to make maps that inform and educate policy-makers or enhance decision-making regarding prospective legislation.

Cathy Cahill, a professor in the Department of Chemistry and Biochemistry at the University of Alaska Fairbanks, started a stint as a congressional fellow with the Senate Energy and Natural Resources Committee in January. Within days, Cahill had produced her first map. It detailed the locations of various types of power plants across the country using open data from the Energy Information Administration.

Because Cahill knows Alaska well and because the committee includes Sen. Lisa Murkowski (R-Alaska), much of Cahill's work centers on her home state. One map she produced highlighted the costs remote Alaskan communities sometimes face due to the long distances petroleum must travel from refineries.

"Working with the Senate, we have incredible data from a bunch of agencies and beautiful maps and databases that I can pull from," she said.

However, she isn't staring into a desktop screen of Esri's ArcGIS on her own. She's working with and training committee staffers and sharing her GIS knowledge so that the mapping can continue after her 12-month fellowship is over -- something the legislators increasingly demand.

It's not uncommon to see Murkowski or Sen. Ron Wyden (D-Ore.) using maps on their mobile devices to explain policy or problems to peers or the citizens they serve. Wyden has gone as far as embedding maps in press releases dealing with Medicare reform.

"It's a very integrative process," Cahill said. "I'll show what data is available, and they'll say, 'We want it presented this way.' To present [it visually] is so much easier than words. You're setting up problems, putting topics they are interested in into a map where they can see it spatially and think about why those things occur and where."

The changing technology landscape

Geographers, GIS experts, coders and cartographers are sought-after professionals in the private sector and government alike. Producing high-quality, informative maps requires a complex skill set, but evolving tools, technologies and policies are simplifying certain aspects of map-making.

Esri's mapping software has long been dominant in federal agencies -- integrating with other large data systems and offering a full suite of analytical tools for those with sufficient training. But Tableau Software and Google Maps offer increasingly powerful visualization options for those new to GIS, while Esri is making its systems more accessible as well. Mapbox's mobile-first approach and open-data focus are winning that Washington, D.C.-based company numerous federal customers, and OpenStreetMap offers a non-proprietary framework and data library that appeal to agencies pushing to open their own geodata.

"Up until recently in geo for government, there really hadn't been a choice," said Mapbox CEO Eric Gundersen.

This growing competition in map-making software is good for the government -- for reasons that go beyond cost and learning curves. Esri, for instance, recently made headlines by enabling federal agencies that use the company's proprietary tools to open geospatial data to developers and the public. Individual agencies can decide whether to release their geospatial data in this way, but the Environmental Protection Agency wasted little time in doing so and other agencies are sure to follow.

Officials at the National Park Service are already moving in that direction. "We support lots of proprietary systems, but we started building core parts of our stack around open source, so we've become more nimble and agile in regard to our development," said Nate Irwin, EGIS and web mapping coordinator at NPS. "That doesn't mean we've lost our connection to the traditional GIS world."

Irwin leads NPS' renowned map-building team, which has created road-closure maps for the Blue Ridge Parkway and air-quality visualizations for each national park, among others. In the near future, Irwin wants NPS maps to track park infrastructure in real time at a level so detailed that a ranger could report a grizzly bear sighting in Yellowstone and have traffic routed around the animal in a matter of seconds.

"Technology has changed dramatically, and things that were impossible to do five years ago are almost easy to do now," Irwin said. "We can really focus on the details."

That ability to drill into the details has a lot to do with improvements in computer hardware. Previously, servers and enterprise systems groaned and chugged through storing and computing resource-intensive geodata. Now in all but the most extreme cases, cloud computing has eliminated the hardware problem. Datasets hundreds of terabytes in size or larger -- such as climate change models organized by the National Oceanic and Atmospheric Administration -- can be processed in short order because of on-demand horsepower available via the cloud.

Storage and computing capacity are not the issues anymore, said Jeff Peters, director of national government sales at Esri. "Complex cloud systems have sprung up, and the elasticity and scalability of the cloud is really what's driving this," he added. "You have the complex tools that leverage the horsepower and the analytical tools that sit back in data centers. The challenge becomes how best do we enable these tools and ask the sophisticated questions."

Disaster prevention and response

Some agencies are using maps to address increasingly complex problems. Disaster response and emergency planning teams descended on New Jersey in preparation for the Super Bowl in February. Local fire department personnel inspected critical facilities near the stadium, and officials digitally scanned documents related to water hookups, the locations of hazardous materials and building blueprints to create a complex, multi-layered mapping system that authorized users could access on mobile devices.

In addition, maps often include real-time weather feeds and camera imaging, said Russ Johnson, Esri's public safety director. The company often partners with the Federal Emergency Management Agency during disasters.

"Not only do you have the single view of what is happening in time, but if you have the appropriate credentials, you and other stakeholders can log on and be connected to the same view of data for shared situational awareness," he added.

Disaster response has also spurred geodata-based applications that any user with access to GitHub can download and run in the cloud for a few dollars. Mitre, a nonprofit organization that operates research and development centers sponsored by the government, developed GeoQ for the National Geospatial-Intelligence Agency as Hurricane Sandy bore down on the East Coast in late 2012. The app allows analysts to compare real-time images after a disaster with existing satellite imagery to conduct damage assessments and provide other valuable information. It also provides a Rosetta Stone of sorts for geodata, allowing users to import, convert and combine virtually all the commonly used formats.

The app has thousands of federal users, 2,000 of whom used it during the Boulder, Colo., flooding in September 2013, said Jay Crossler, senior principal software engineer at Mitre. Yet anyone can download the code and run it on a virtual machine in the cloud for about $14 a year.

"We've made it all available so that anyone can set it up," Crossler said.

The importance of context

Not surprisingly, the intelligence community is at the forefront of geospatial technology and mapping, led by NGA. The agency is in the early stages of building its Map of the World, an internal platform for all the geo-intelligence and multisource content the agency collects for the intelligence community. At a geospatial conference hosted by Esri in February, NGA Director Letitia Long said the agency's goal is to have analysts fully immersed in an information environment that is enhanced with the latest visual, auditory and tactile tools by 2020.

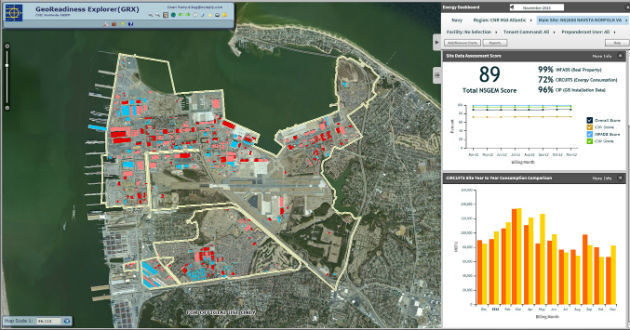

The Navy maps energy consumption at Naval Station Norfolk and other installations, monitoring trends and visualizing opportunities to economize. (Esri image)

NGA's budget, which has doubled to $5 billion in the past 10 years, highlights the growing role geospatial data plays in national security. The agency collects petabytes of data via various platforms. To use and share that data effectively, officials have to give it context, which can be a challenge if the data lacks geospatial attributes.

"How do you put context to documents that don't have geospatial latitude and longitude in them? How do you disambiguate?" asked Michael Walsh, senior director of Virginia Intelligence Programs at Intelligent Decisions, which has IT contracts with several intelligence and defense agencies. "That's a big piece to making sense of information."

"Maps aren't pieces of paper anymore," he added. "They are layers on top of each other. Geography, weather patterns, crops, migrations of people -- those are all layers."

Whether they show dots on a map, trends over time, real-time situational awareness or possible policy implications, maps have a growing importance in government. In the past decade, technology has allowed for the production of better maps while simultaneously improving the consumption of the information they provide, down to nearly every mobile and Web-connected device. People used to use maps so they didn't get lost. Now we use them for almost everything.