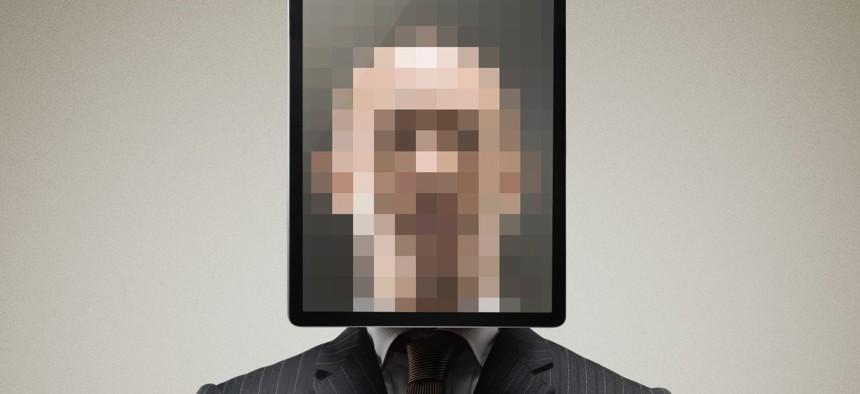

DHS wants answers on how well digital identity systems work

The Department of Homeland Security's Science and Technology Directorate is testing whether identity documents can reliably be verified through new technology. Yagi Studio / Getty Images

The Science and Technology Directorate is moving forward with another round of testing for its remote identity validation technology demonstration.

The research arm of the Department of Homeland Security is continuing its testing to evaluate how effective remote identity validation technologies are.

The hope is that DHS can answer basic questions about how well these technologies work.

DHS’ Science and Technology Directorate said Tuesday that it would conduct new tests that focus specifically on the ability of systems to match selfies to photos on identity documents, a process meant to weed out any fraudsters trying to use stolen IDs.

The directorate is accepting applications through June 22 from tech developers who want to participate.

The so-called remote identity validation technology demonstration also includes tests of systems meant to confirm that identity documents like driver’s licenses are valid by assessing a photo of them, said Arun Vemury, the lead of the directorate’s biometric and identity tech center.

Future tests will look at so-called liveness tech meant to confirm that it’s a real person on the other end of the screen submitting identity documents.

“There’s a lot of questions we just don’t really have good answers for right now,” said Vemury, ticking off the unknowns.

“Does this really work? Or, how well does it work? If we do this on a massive scale, does that open the door for fraud if we don’t actually have a good understanding of how this actually works,” he said. “Will it work for all people equally? Do they have to have the newest, latest and greatest phones for it to work well?”

The ongoing research is happening as these types of remote identity technologies have been garnering more attention in the government space, where they are sometimes used to vet applicants for government benefits.

The use of identity proofing technology that utilizes selfie matches against identity documents has proliferated across the unemployment system, for example, in an effort to suppress increases in fraudulent claims during the pandemic.

But remote identity proofing is also used in other contexts to check people trying to open a bank account or verify a social media account.

Still, the use of facial recognition has been fraught, with advocates and even some government watchdogs expressing concern about the equity implications of these systems.

And clearing the identity proofing standard set by the National Institute of Standards and Technology is currently easiest with a biometric like facial recognition. NIST is revising the standard and appears to be planning major updates on the use of facial recognition while investigating potential new options that don’t use the technology at all.

All this is happening as the landscape continues to shift in terms of the threats these technologies are trying to address and how remote identity-proofing tech is used, Vemury said.

“A lot of the security features in the ID documents are in different wavelengths of light… and you need special lighting sources to be able to even see them,” he said. “So the question then is, when I take a photo with my smartphone, which wasn't really meant to take photos of IR or infrared or UV light, and now we're trying to detect the security features… Are we really getting the security that we expect out of these documents when we use it in this new type of process?”

How bad actors might try to evade liveness detection tests is changing quickly as well, said Vemury, pointing to 3D printers, high-resolution screens and digital injection attacks, in which fraudsters bypass the camera altogether.

The tests will look at both how well these sorts of remote identity proofing technologies recognize bad actors and how often they mistakenly flag a legitimate person as a fraudster, said Vemury.

The stakes are high for both.

In terms of fraud, online programs and systems potentially open the door for sophisticated bad actors to carry out more attacks more quickly than they could in person, said Vemury. He pointed to banking as an example, where operating online might be easier than trying to defraud many individual bank tellers.

And when the systems flag legitimate people as fraudsters, that’s the difference between easily accessing a government benefit after a first attempt at identity proofing and not.

How many legitimate people are getting caught up in these systems is another unknown, said Vemury.

“Some people will report out, ‘We accepted X percent of transactions or X percent of transactions were successful,’” he said. “But we don't know if the remainder were because of fraud or legitimate people who just weren't able to complete the steps for whatever reason or the software wouldn't work for them.”